Paedophiles are brazenly sharing child pornography pictures and videos on Facebook, and internet safety experts believe the social networking site has failed to curtail the practice.


In an investigation, this website established that there are countless profiles and groups created by paedophiles – sometimes using their real names – who publish child pornography images and videos on the site.

Frustrated at the lack of action from Facebook, a group of concerned netizens from around the world, including at least one in Australia, have launched a vigilante group dubbed Social Network Safety Watch (SoNeSaW).

Most people use Facebook to network with, and seek out, people who have similar interests, and the same applies to child predators, who use the site for validation and reassurance that their morbid fantasies are "normal".

Paedophiles add a series of keywords to their profiles – such as "lolita", "hussyfan", "r@ygold" and "PTHC" (pre-teen hardcore) – which helps them find each other on the site using the built-in search feature.

"Thanks to my new friends for helping me realise that I am more normal than I thought I was," reads a wall post by one user, who talks about abusing his "gorgeous" 10-year-old daughter.

"So this is where we let it all hang out and can finally talk openly and share with like-minded people."

The user lists his activities as "daughters", "lolitas" and "I love to play with my daughter".

Countless examples of child porn

Facebook predators uncovered in researching this story, including some users who list their location as Australia, were referred to the Australian Federal Police for investigation.

The predators often pose as children, using pictures of children as their profile photos and stating on their profiles that they are still in school.

This helps them convince their victims to send them naked photos of themselves. The Gladstone Observer has reported on a recent case in which a Sydney man tried to befriend a 13-year-old girl and to convince her to play "master and slaves" with him.

Facebook, which has to police more than 500 million users, cannot keep up with the child pornography trade on its site. Whenever its security team deletes a profile or removes images, they reappear almost instantly.

Facebook too slow to respond

Members of the SoNeSaW vigilante group create dummy Facebook profiles and infiltrate the child porn networks. Many of the vilest images and videos are traded in high-level hidden groups, which users need to be invited to.

"The profiles have been reported to FB by many members of our reporting groups ... and some have been disabled by FB eventually however these profiles have simply been remade within hours and have come back and continue to do the same thing," an Australian SoNeSaW member said.

"It takes usually 50-100 of us to report a profile before it is removed, and this can still take several days. Then they come back and we report again and it takes a long time to get rid of them again – it's just not good enough."

She said she hoped countries would pressure the US government to lay charges against Facebook for "criminal negligence" in hosting child porn groups and enabling the swapping of child porn.

The group also reports child predators to police, but identifying the users and their locations, and then notifying police in the correct jurisdiction, is a significant problem.

A US member of the SoNeSaW group, who did not want to be identified, has set up a blog, facebookwatcher.blogspot.com, on which she catalogues some of the child predator profiles she finds.

"The blog is just the tip of the iceberg ... Facebook's efforts to deal with this have been underwhelming but the problem is bigger than just them," she said in a phone interview.

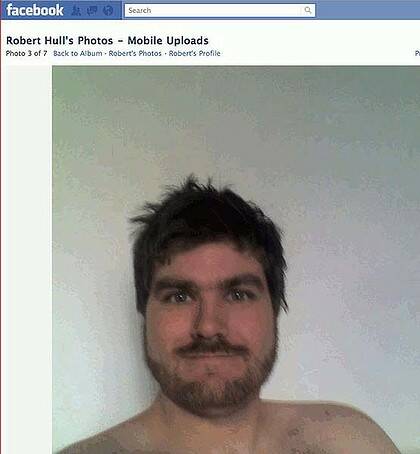

The US member's vigilance has paid off in at least one instance. Robert Hull, 35, from Idaho, who regularly featured on the Facebookwatcher blog, was arrested this month on charges of "production and transportation of sexually explicit images of minors", the US Attorney's Office announced.

Hull allegedly victimised a four-year-old girl to produce the images and his interests listed on his Facebook profile include "2 to 12yo girls" and "playing with my little girl".

In August, this website revealed that Facebook management repeatedly failed to report the activity of an international child pornography syndicate operating on the site and ignored continuing admissions by one of the ring's Australian members.

The next day, the site agreed to pass information about criminal activity on its site to Australian police more quickly. However, the Facebook trade in illegal child pornography, including by Australians, continues unabated.

Facebook has also been criticised for being too slow to remove other vile content, such as gruesome pictures of corpses that are published by pranksters on tribute pages dedicated to teens who have died.

A decreased focus on safety

Rod Nockles, former director of safety at MySpace Australia and now a vice-president with e-safety education provider i-Safe, said Facebook and MySpace had failed to deal with child pornography because they did not resource their security teams adequately.

"As they've struggled to monetise the sites and get the sort of return they're looking for, the initial big investments in safety and security have been significantly wound back," he said in a phone interview.

"This means that as new threats like paedophile rings become more sophisticated and develop means of defeating existing safety measures, the safety and security of the sites become increasingly compromised.

"Increasingly their focus in the safety area is one of legal compliance with minimum standards that fail to keep up with the changing nature of the threat. While maintaining safety will always be a challenge given the dynamic nature of the environment, the tardy approach being adopted by all major networking sites is indefensible."

But experts spoken to by this website agree that the child porn problem is difficult to address, as the predators use a variety of tools to mask their IP addresses and get around any bans.

The Australian Federal Police reiterated its demand that Facebook consider employing a "law enforcement liaison officer" in Australia, and said Facebook should constantly scan for child exploitation material and inform law enforcement of its findings.

Facebook said it was in the process of hiring a communications and public policy specialist in Australia and part of that role would involve security.

"Technology reliance combined with the reach and speed of the internet allows criminals to operate from international locations with limited regulation and legislation," a federal police spokesman said.

A spokeswoman for Communications Minister Stephen Conroy said Facebook had responsible use policies to remove offensive content and the government expected them to be enforced.

The government was consulting with Facebook to "strengthen these processes particularly regarding the length of time taken to act".

Facebook's filtering mechanisms – but do they work?

Facebook's chief security officer Joe Sullivan said that when the site identified child pornography, it immediately disabled the relevant accounts and filed "detailed reports" with the US-based international clearinghouse, the National Center for Missing and Exploited Children (NCMEC).

NCMEC is charged with distributing those reports to the appropriate law enforcement agencies around the world.

Mr Sullivan said the site did not rely solely on user reports to weed out child predators and had developed technology systems to seek out the material. These included "image screening, keyword filtering, pattern analysis, known bad URL blocking and name-based reviews for repeat violators".

"We've developed our lists of known bad images, known bad keywords, known bad URLs and known repeat violators through close collaboration with law enforcement agencies, from the FBI to the AFP and Interpol," he said.

It is not clear why Facebook's automatic keyword detection mechanisms have not been able to pick up many of the key terms, such as PTHC, used in child predator profiles.

Mr Sullivan noted that, while Facebook had tools to block child pornography, these did not exist elsewhere on the web so "we feel Facebook is much safer than the rest of the web".

"Just like in the offline, real world, there are instances of inappropriate and even criminal behaviour online," Mr Sullivan said.

"There is no single answer to eradicating predators in real life, nor is there on the internet."

The different types of paedophile

The Australian Institute of Criminology recently published the results of a small-scale study into the online interactions of suspected paedophiles with undercover Australian police officers posing as children.

It found differences in how predators approach boys and girls online. With female children, sexual chat was initiated almost immediately and was often structured around domination, with the predators using blackmail and threatening behaviour to achieve short-term sexual gratification.

With male children, the predators' behaviour was geared towards establishing mutual respect and trust. They slowly inquired about the boys' sexual experience and physical bodies and often sent them pornography as a way of desensitising them.

The offenders often preyed on boys who were confused about their sexuality and groomed them over long periods with a view to meeting them in the real world.

This had implications for law enforcement, the study found, as many child porn stings were short-term operations geared towards predators who targeted girls.

"A tendency to focus on securing a quick arrest, rather than engaging in protracted interactions, may be excluding some offenders from arrest, although they may represent an equal or greater danger to children," the study found.